Even if you don’t follow the nuts and bolts of generative AI, you’ve probably noticed that these models are memory hogs. Buying RAM right now feels like getting fleeced at a used car lot. Google Research just dropped TurboQuant, a compression algorithm that tackles one of the biggest bottlenecks: the key-value cache.

Think of the key-value cache as the model’s scratchpad. It stores the key and value vectors already computed for earlier tokens, so the LLM doesn’t have to recompute them from scratch every time it generates a new token. Without it, inference would be painfully slow. But this cache balloons in size because it holds high-dimensional vectors (those arrays of numbers that represent the meaning of words, phrases, or even image patches) for every token in the context. More dimensions and longer contexts mean more memory, and that’s where things get ugly.
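To get a feel for how quickly the cache grows, here is a rough back-of-the-envelope sizing in Python. The formula is the standard one for a transformer that stores one key and one value vector per token, per layer, per attention head. The model shape below is a hypothetical example chosen for illustration, not a figure from Google's announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Estimate KV cache size in bytes.

    The leading 2 accounts for storing both a key and a value vector
    for every token, in every layer, for every KV head.
    bytes_per_value=2 corresponds to 16-bit floats.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical model shape, for illustration only.
size = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128, seq_len=4096)
print(f"{size / 2**30:.2f} GiB")  # prints "2.00 GiB"
```

Doubling the context length doubles that figure, which is why long conversations and long documents are where the cache starts to hurt.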

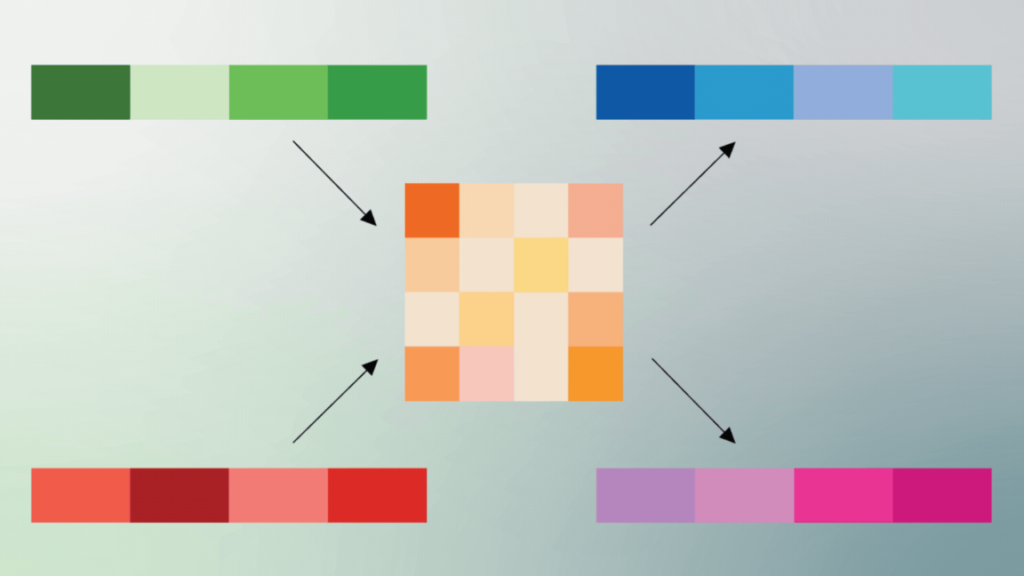

Quantization is the usual fix. You reduce the precision of those numbers—say, from 32-bit floats to 8-bit integers—and suddenly the model fits in less memory. The problem? Quality usually takes a hit. The model starts making dumber predictions because you’ve thrown away information. It’s a trade-off that’s been around for years, and nobody has fully solved it.
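Here is a minimal sketch of what that precision reduction looks like, using plain symmetric 8-bit quantization on a single vector. This is the textbook baseline, not TurboQuant, and the sample values are made up for illustration.

```python
def quantize_int8(values):
    """Symmetric per-vector quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


vector = [0.12, -1.5, 0.731, 2.04, -0.003]  # made-up sample values
codes, scale = quantize_int8(vector)
restored = dequantize_int8(codes, scale)

# Rounding to the nearest code means each value is off by at most scale / 2.
max_error = max(abs(a - b) for a, b in zip(vector, restored))
print(codes)
print(f"max round-trip error: {max_error:.5f} (bound: {scale / 2:.5f})")
```

Each value now needs one byte instead of four, but the small entries lose most of their detail. That rounding error is the information being thrown away.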

TurboQuant claims to break that trade-off. Google’s early benchmarks show an 8x speedup in inference and a 6x reduction in memory usage, all without measurable accuracy loss. Those numbers are higher than I expected, and honestly, I’m a little skeptical until I see independent replication. But the approach is clever: instead of naively quantizing everything at the same precision, TurboQuant adapts the compression based on the importance of each vector. Important stuff stays high-precision; fluff gets squeezed harder.
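To make the "important stuff stays high-precision" idea concrete, here is a toy sketch of importance-based mixed precision. It is emphatically not Google's algorithm. The importance scores, the `keep_fraction` parameter, the 8-bit and 4-bit widths, and both function names are assumptions invented for this illustration, and nothing here says how a real system would measure importance.

```python
def quantize(values, bits):
    """Symmetric uniform quantization of one vector to `bits` bits per value."""
    levels = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / levels if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def assign_bits(importance, keep_fraction=0.25, high_bits=8, low_bits=4):
    """Give the top `keep_fraction` of vectors (by score) the higher bit width."""
    n_high = max(1, int(len(importance) * keep_fraction))
    ranked = sorted(range(len(importance)),
                    key=lambda i: importance[i], reverse=True)
    high = set(ranked[:n_high])
    return [high_bits if i in high else low_bits
            for i in range(len(importance))]


# Made-up cached vectors and hypothetical importance scores.
vectors = [
    [0.9, -0.2, 0.4, 0.1],
    [0.05, 0.02, -0.01, 0.03],
    [1.2, -1.1, 0.7, 0.3],
    [0.1, 0.0, -0.1, 0.2],
]
importance = [0.6, 0.1, 0.9, 0.2]

bits = assign_bits(importance)
compressed = [quantize(v, b) for v, b in zip(vectors, bits)]
avg_bits = sum(bits) / len(bits)
print(bits, avg_bits)  # prints "[4, 4, 8, 4] 5.0"
```

In this toy case the average drops to 5 bits per value, while the highest-scoring vector keeps full 8-bit resolution. Whether that kind of split preserves accuracy depends entirely on how good the importance signal is.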

This isn’t magic; it’s a smarter quantization schedule. But if it holds up, it could make running large models on consumer hardware or edge devices far more practical. We’re still a long way from running GPT-4 on a phone, but this is a meaningful step in that direction. Google hasn’t released the code yet, so we’ll have to wait and see if the real-world results match the paper.

For now, it’s one of the more promising compression techniques I’ve seen in a while. No fluffy promises, just solid engineering with real numbers.
