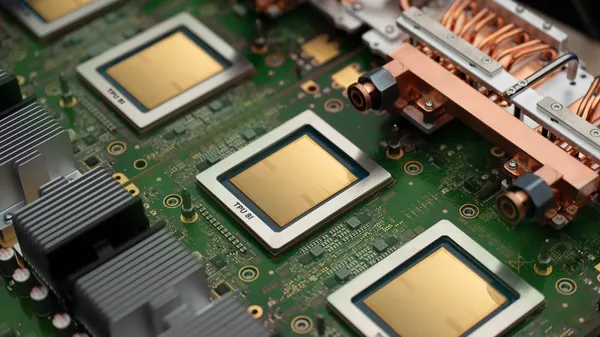

Google’s been quietly iterating on TPUs for years, and the eighth generation just landed with a twist: two specialized chips instead of one.

One is built for training massive models. The other is tuned for inference and reasoning—the kind of step-by-step thinking that powers agents, not just chatbots. That split makes sense when you think about how AI workloads have changed.

Training chips are brute-force machines. They need raw throughput, massive memory bandwidth, and the ability to chew through data for weeks. Google’s been good at that. But inference is a different beast. There, latency matters more than raw throughput. You’re not just generating tokens; you’re planning, backtracking, verifying. That’s what the second chip targets.

I’ve seen a lot of hardware announcements that promise the moon but deliver marginal gains. This one feels different because the specialization is real. Training and inference have diverged enough that a single chip design compromises both. Splitting them isn’t just marketing—it’s engineering honesty.

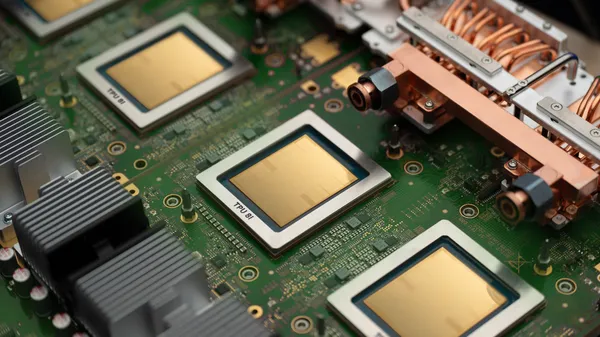

The timing is interesting too. Every major lab is racing to build agentic systems—models that can browse the web, execute code, or coordinate subtasks. Those systems don’t just need raw compute; they need fast reasoning loops. A chip optimized for that could give Google an edge in latency-sensitive applications.

Of course, the devil’s in the details. We don’t know pricing, availability, or how these compare to Nvidia’s latest Blackwell architecture. Google’s TPUs have historically been tied to its own cloud, so you’re not buying these off the shelf. That limits adoption for anyone not already deep in the GCP ecosystem.

Still, this is the most interesting TPU generation in years. The agentic era is still early, and having hardware purpose-built for it is a bet that could pay off big—or end up as another niche product if the market shifts again.
