Google Research just published a paper that takes a hard look at a problem most of us in ML have been quietly ignoring: human disagreement.

We all know the drill. You build a model, you need to evaluate it, so you hire some raters on Mechanical Turk or a labeling platform. You give them a rubric, they label a few thousand items, and you average out the results. Maybe you do a quick Fleiss’ kappa check and call it a day.

But here’s the thing nobody likes to talk about: humans disagree. A lot. And we’ve been systematically pretending they don’t.

Flip Korn and Chris Welty from Google Research ran the numbers on this, and the results are uncomfortable for anyone who’s ever published a benchmark with 3 raters per item.

The forest vs. tree trade-off

The core question is deceptively simple. You have a fixed budget. Do you spend it on breadth (more items, fewer raters per item) or depth (fewer items, more raters per item)?

Think of it like restaurant reviews. The forest approach asks 1,000 different people to each try one dish. You get a broad sense of the place, but you have no idea if that one dish was a fluke. The tree approach asks 20 people to try the same 50 meals. You get much richer signal on each dish, but you’ve only sampled 50 items total.

Most of the AI world has been firmly in the forest camp for years. The standard is 1 to 5 raters per item. The assumption is that with enough items, the noise averages out and you find the “true” label.

That assumption, according to this research, is often wrong.

What they actually did

The team built a simulator that stress-tests thousands of different (N, K) combinations — N being the number of items, K being the number of raters per item. They ran this on real-world datasets involving subjective tasks like toxicity detection and hate speech classification.

They defined “reproducibility” as getting the same result with p < 0.05 when you run the evaluation twice. That's a pretty standard bar, but it turns out it's surprisingly hard to hit when human disagreement is high.

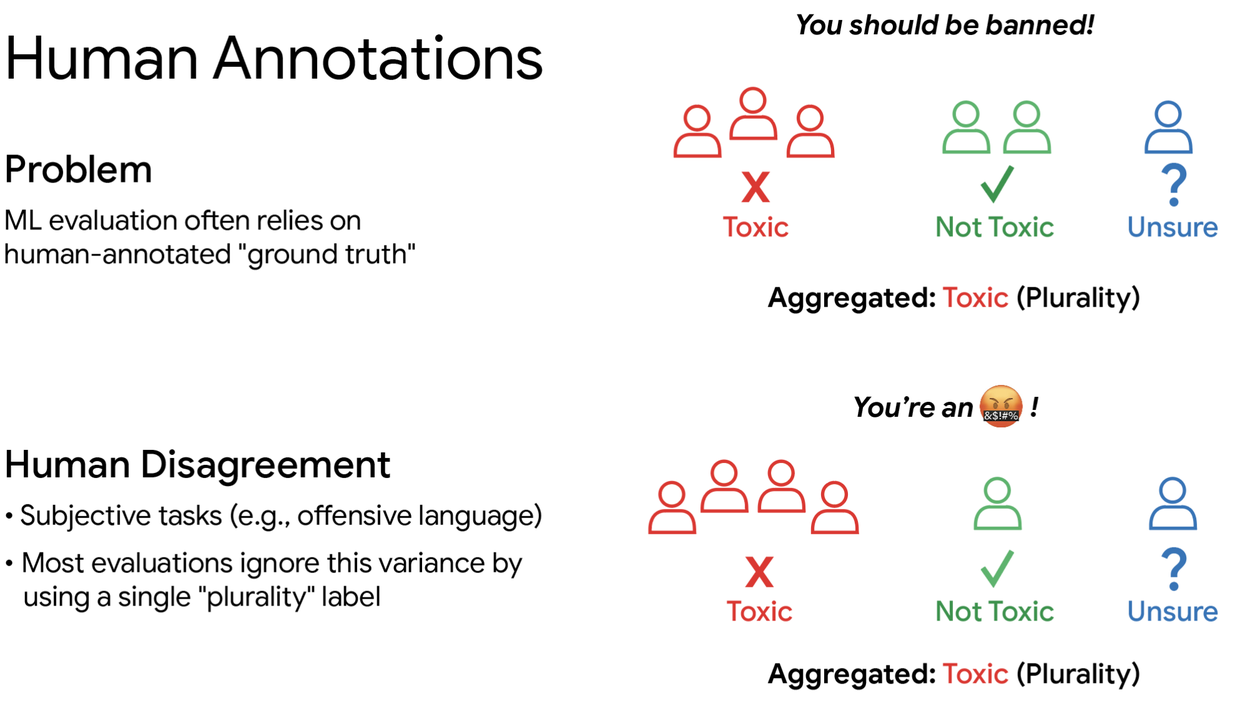

Using plurality to represent multiple ratings ignores the variation. Both examples above have the same plurality but the latter is more clearly leaning towards “Toxic”.

The key insight is that when you collapse multiple ratings into a single plurality label, you’re throwing away information. Two items can have the same plurality but wildly different levels of agreement among raters. One might be 60/40 split, another 90/10. Treating them the same is lazy and introduces noise into your benchmark.

What they found

I won’t bury the lead: the optimal configuration depends heavily on how much disagreement your task naturally generates. But there are some general patterns that emerged.

For tasks with low to moderate disagreement (like straightforward image classification), the forest approach works fine. You can get away with 3-5 raters per item and a large number of items.

But for tasks with high disagreement — and this is where it gets interesting — you need way more raters per item than anyone is currently using. We’re talking 20-50 raters per item for tasks like toxicity detection or content moderation, where reasonable people genuinely disagree on what counts as offensive.

The paper provides a framework for calculating the optimal (N, K) given your budget and the expected disagreement level of your task. They’ve also open-sourced a simulator so you can run your own numbers.

Why this matters

This isn’t just academic nitpicking. If your benchmark isn’t reproducible, it’s not really a benchmark. It’s a noisy signal that might or might not mean what you think it means.

Consider the implications for model comparisons. If Model A beats Model B on your benchmark by 2%, but your benchmark has high disagreement and low rater depth, that 2% might just be noise. Run the same evaluation again with different raters and you might get the opposite result.

This is the kind of problem that undermines trust in the entire field. We’ve all seen papers where the benchmark results look too good to be true. Sometimes they are. But sometimes the benchmark itself is just unreliable.

My take

I’ve been saying for years that we need to take human annotation variance more seriously. Most teams treat raters as interchangeable sensors that produce ground truth, when in reality they’re humans with different backgrounds, biases, and interpretations.

The Google team’s framework is a solid step forward, but it has limitations. The simulator assumes you can predict disagreement levels before you start collecting data, which is often not the case. And the budget constraints in the real world are messier than the clean (N, K) trade-off suggests.

Still, this is the kind of foundational work that should change how we build benchmarks. If you’re running evaluations on subjective tasks, read the paper. And for the love of god, stop using 3 raters per item for toxicity detection.

Comments (0)

Login Log in to comment.

Be the first to comment!